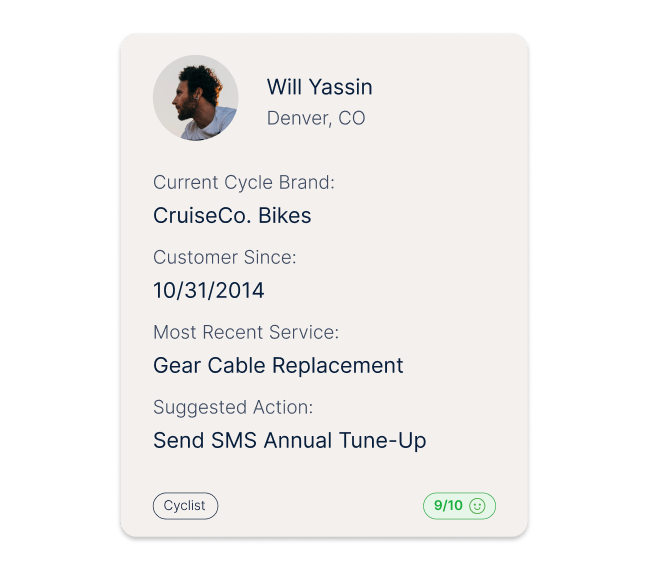

Uniquely theirs

Tailor each journey to the specific wants and needs of individuals through intelligent personalization tools and automations

The best brands in the world use Medallia.

More signals.

One customer truth.

Get a rich 360 degree view of customers with social media, transcripts, speech analytics, ticketing systems, and digital behavior which is now mission critical.

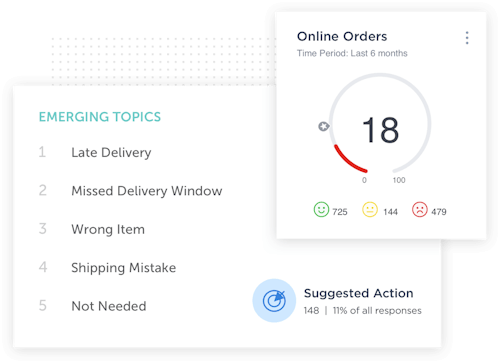

AI sees what you’d otherwise miss.

Medallia's AI and machine-learning engine is tailor made for experience data. Easily analyze structured and unstructured insights to uncover what people care about, prioritize actions and predict their behavior.

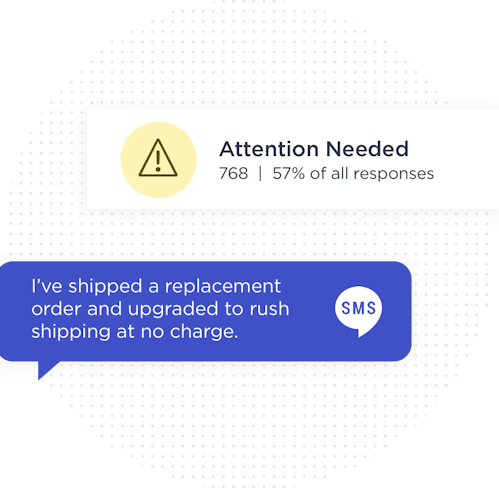

Mobilize employees in the moment. Not after.

Influence experiences as they happen. Get insights to the right people in your organization in real time.

Integrations that tap into the tools your teams use everyday.

Unlock flexibility with out of the box integrations for common systems and powerful, robust APIs and ETLs to harness all your data, work how you want, take action, and transform experiences.